YL2 character coloration - A first glimpse

Originally posted Jul 28 at 8:41am.

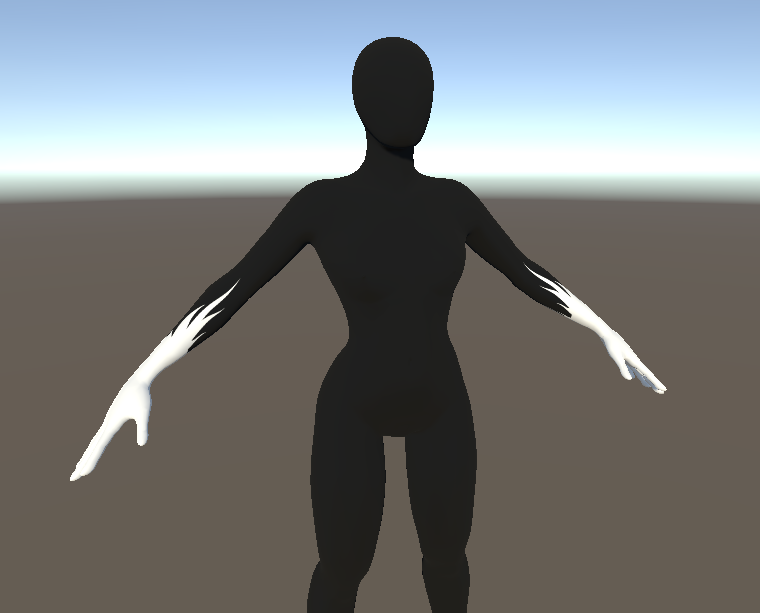

This past month we've laid the foundations for a texture building system to be used in the upcoming character editor. With this system, we want to offer users tremendous freedom in how characters can be colored and stylized. This is just one of several parts we are implementing for stylization.

Texture builder - GPU powered texture generation

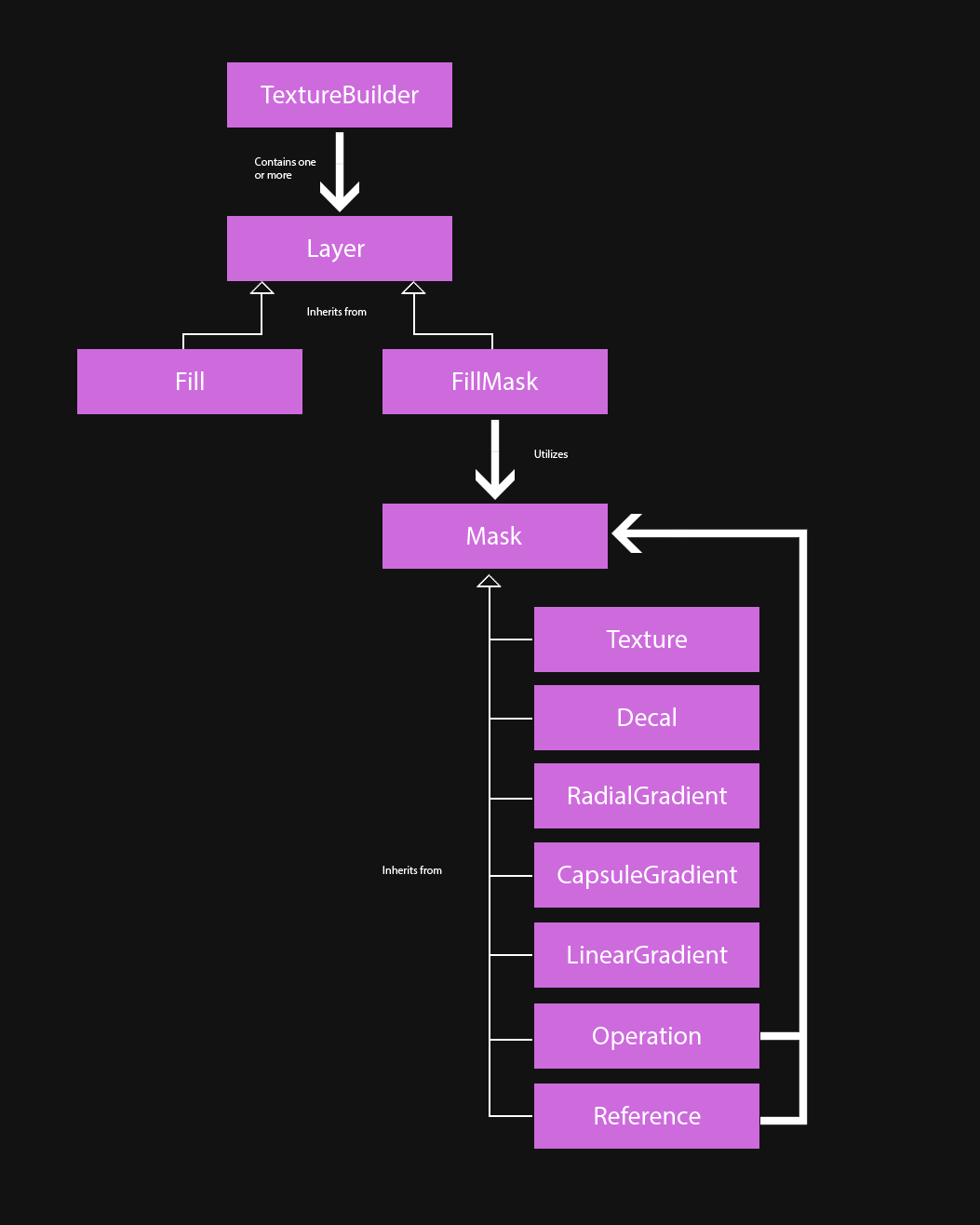

Texture builder is a custom system we have developed that generates textures from user input. The input consists of layers and masks, telling the builder how to fill each texture output. The texture builder has 4 different channels - albedo, emission, metallic and smoothness - and each layer writes into these channels. The resulting textures from a texture builder are used by the material for the character.

At the moment, there are two different types of layers - "Layer Fill" and "Layer Fill Mask". The fill layer is the most basic layer. It simply fills each channel with the chosen input. The fill mask layer takes a "Mask" as input when filling each channel (masks are covered in more detail below). Both of these layers inherit from the base class "Layer", which offers options for enabling and disabling the layer as well as changing blend modes.

There is currently no limit to how many layers you can have in a texture builder. Each layer is processed on its own, and no more resources are allocated than a single layer needs at a time, so theoretically we could have millions of layers. Naturally it takes some time to process each layer, so we may impose an artificial limit so things don't get too extreme (maybe a 64 layer limit or something like that).
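To make the layer model above more concrete, here is a minimal CPU-side sketch in Python. It is not our actual GPU implementation. The names `build`, `coverage` and `values` are made up for illustration, every channel is simplified to a single number per texel (albedo would really be a color), and only two of the blend modes are shown.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

CHANNELS = ("albedo", "emission", "metallic", "smoothness")

# Two blend modes for illustration, applied per value in the 0..1 range.
BLEND_MODES: Dict[str, Callable[[float, float], float]] = {
    "Replace": lambda dst, src: src,
    "Multiply": lambda dst, src: dst * src,
}


@dataclass
class Layer:
    """Base class: options shared by every layer."""
    enabled: bool = True
    blend_mode: str = "Replace"

    def coverage(self, texel: int) -> float:
        raise NotImplementedError


@dataclass
class LayerFill(Layer):
    """Fills the chosen channels everywhere."""
    values: Dict[str, float] = field(default_factory=dict)

    def coverage(self, texel: int) -> float:
        return 1.0


@dataclass
class LayerFillMask(Layer):
    """Fills the chosen channels only where the mask selects."""
    values: Dict[str, float] = field(default_factory=dict)
    mask: List[float] = field(default_factory=list)

    def coverage(self, texel: int) -> float:
        return self.mask[texel]


def build(layers: List[Layer], texel_count: int) -> Dict[str, List[float]]:
    """Apply the layers in order; only one layer is in flight at a time."""
    out = {name: [0.0] * texel_count for name in CHANNELS}
    for layer in layers:
        if not layer.enabled:
            continue
        blend = BLEND_MODES[layer.blend_mode]
        for channel, value in layer.values.items():
            texture = out[channel]
            for i in range(texel_count):
                blended = blend(texture[i], value)
                # The layer's coverage decides how much of the blend is kept.
                texture[i] += (blended - texture[i]) * layer.coverage(i)
    return out
```

Because `build` only ever touches the running output and the current layer, the working memory does not grow with the number of layers, which is the property described above.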

The idea is to allow a texture builder to be saved and loaded on any character. We are thinking that users will be able to share patterns by uploading them to the cloud, where they can be browsed and downloaded, much like how the interaction browsing works currently in Yiffalicious. Since a texture builder is basically just a set of instructions, it takes up very little space and can be downloaded fast. Furthermore, texture builders are fast to compute since the majority of the work is performed on the GPU.
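As a rough illustration of why such a recipe is small, here is a sketch that stores a two-layer builder as JSON. The file format is not decided yet, so every field name below (`layers`, `type`, `blend`, `location1` and so on) is a hypothetical placeholder and not the real format.

```python
import json

# Hypothetical recipe: instructions only, no pixel data.
recipe = {
    "layers": [
        {"type": "LayerFill", "blend": "Replace", "albedo": [0.8, 0.6, 0.4]},
        {
            "type": "LayerFillMask",
            "blend": "Multiply",
            "emission": [1.0, 0.2, 0.0],
            "mask": {
                "type": "MaskLinearGradient",
                "location1": [0.0, 0.0, 0.0],
                "location2": [0.0, 1.0, 0.0],
            },
        },
    ]
}

# Compact encoding, as it might be uploaded or downloaded.
encoded = json.dumps(recipe, separators=(",", ":")).encode("utf-8")

# Decoding gives back the same instructions.
restored = json.loads(encoded)
```

A recipe like this is a few hundred bytes, whereas the textures it generates would be many times larger.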

Masks

Masks are single channel textures (grayscale, black to white). You can think of masks as selections on the 3D model. The "Fill Mask" layer utilizes these masks to fill a specific part of the model with a color (and/or emission/metalness/smoothness). To this end, we have so far implemented 7 different types of masks.

All masks inherit from the abstract class "Mask". This class cannot be instantiated, but offers functionality used by all subclasses.

Mask (Abstract base class)

Functions / Options:

Invert

Invert the mask. (Flip black and white colors).

Flip

Flip the resulting mask, so right side becomes left, and left side becomes right.

https://gfycat.com/ConsciousPaleBurro

Mirror

Mirror the mask, applying it on both sides.

https://gfycat.com/AdorableBitesizedIberianmole

Levels

Perform a Photoshop-esque levels operation on the mask.
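The four options above can be sketched on a small grayscale grid. This is a simplified illustration and not the real code: it assumes the character's left/right symmetry maps to the horizontal center of the grid (real texture layouts are rarely that tidy), it interprets "Mirror" as taking the brighter of the mask and its flipped copy, and the `black`, `white` and `gamma` parameters of `levels` are assumed names borrowed from the usual levels dialog.

```python
from typing import List

Mask = List[List[float]]  # rows of grayscale values in the 0..1 range


def invert(mask: Mask) -> Mask:
    """Swap black and white."""
    return [[1.0 - v for v in row] for row in mask]


def flip(mask: Mask) -> Mask:
    """Move the selection to the opposite side."""
    return [row[::-1] for row in mask]


def mirror(mask: Mask) -> Mask:
    """Keep the selection and also apply it on the opposite side."""
    return [[max(a, b) for a, b in zip(row, row[::-1])] for row in mask]


def levels(mask: Mask, black: float = 0.0, white: float = 1.0,
           gamma: float = 1.0) -> Mask:
    """Remap black..white to 0..1, clamp, then apply a gamma curve.

    Requires white > black and gamma > 0.
    """
    span = white - black

    def remap(v: float) -> float:
        t = min(max((v - black) / span, 0.0), 1.0)
        return t ** (1.0 / gamma)

    return [[remap(v) for v in row] for row in mask]
```

For a one-row mask `[[1.0, 0.25, 0.0]]`, `flip` moves the bright texel to the right edge, while `mirror` makes both edges bright.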

Mask texture

Mask texture is probably the most basic type of mask. It takes a predefined selection (authored texture) as input. The idea is to offer a vast amount of different selections, so you're able to fill specific parts of the body with ease, as well as find interesting patterns and combinations.

Inputs

Texture

Mask decal

Mask decal is similar to the Mask texture in the sense that it takes a texture as input. This texture is not a predefined selection, but rather an image that can be projected onto the mesh. You can of course tweak where this decal is projected, its size and rotation.

As with the other masks, this operation is performed on the GPU and is therefore very fast. What you see below is texture generation performed in real time.

Inputs

Decal texture

Size

Location

Rotation

Two sided

Distance

Fade distance

https://gfycat.com/IndelibleWellinformedLark

Mask radial gradient

This mask takes a point, radius and blend parameter as inputs. The mask is filled from the point source towards its radius, according to the blend parameter. It's a spherical type of selection.

Inputs

Source

Radius

Blend

https://gfycat.com/CookedIllinformedIndianhare
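A minimal sketch of how a radial gradient could be evaluated for one surface point. The exact meaning of the blend parameter is an assumption here: it is treated as the fraction of the radius over which the mask fades from white to black, so 0 gives a hard edge and 1 fades all the way from the source. The function name is made up for illustration.

```python
import math
from typing import Sequence


def radial_gradient(point: Sequence[float], source: Sequence[float],
                    radius: float, blend: float) -> float:
    """Mask value in 0..1 for a surface point (assumed falloff model)."""
    distance = math.dist(point, source)
    inner = radius * (1.0 - blend)  # fully white inside this distance
    if distance <= inner:
        return 1.0
    if distance >= radius:
        return 0.0
    # Linear fade between the inner distance and the radius.
    return (radius - distance) / (radius - inner)
```

With a radius of 2 and a blend of 0.5, points closer than 1 unit to the source are fully selected, and a point 1.5 units away gets a value of 0.5.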

Mask capsule gradient

The capsule gradient is similar to a radial gradient, but instead of originating from a single point, it originates from a line (defined by two points).

Inputs

Location 1

Location 2

Radius

Blend

https://gfycat.com/VagueEssentialDrafthorse
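The only difference from the radial case is how the distance is measured: from the surface point to the closest point on the line segment. Below is a sketch under the same assumptions as before (blend is treated as the fraction of the radius used for the fade, and the function names are made up for illustration).

```python
import math
from typing import Sequence


def distance_to_segment(p: Sequence[float], a: Sequence[float],
                        b: Sequence[float]) -> float:
    """Distance from point p to the segment between a and b."""
    ab = [bi - ai for ai, bi in zip(a, b)]
    ap = [pi - ai for ai, pi in zip(a, p)]
    length_sq = sum(c * c for c in ab)
    # Project p onto the line, then clamp to stay on the segment.
    t = 0.0 if length_sq == 0.0 else sum(x * y for x, y in zip(ap, ab)) / length_sq
    t = min(max(t, 0.0), 1.0)
    closest = [ai + t * c for ai, c in zip(a, ab)]
    return math.dist(p, closest)


def capsule_gradient(point: Sequence[float], location1: Sequence[float],
                     location2: Sequence[float], radius: float,
                     blend: float) -> float:
    """Mask value in 0..1 for a surface point (assumed falloff model)."""
    distance = distance_to_segment(point, location1, location2)
    inner = radius * (1.0 - blend)
    if distance <= inner:
        return 1.0
    if distance >= radius:
        return 0.0
    return (radius - distance) / (radius - inner)
```

If both locations coincide, the segment collapses to a point and the result is the same as a radial gradient.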

Mask linear gradient

The linear gradient is defined by two points. The first point means black, and the second one means white. Texture surface area in between the points will be blended from black to white, depending on where it is on the virtual line defined by the two points. Surface area beyond the first point will be completely black, and surface area beyond the second point will be completely white.

Inputs

Location 1

Location 2

https://gfycat.com/BoldSimpleBarb
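In other words, each surface point is projected onto the line through the two locations and the result is clamped. A minimal sketch (the function name is made up for illustration, and a plain linear ramp is assumed):

```python
from typing import Sequence


def linear_gradient(point: Sequence[float], location1: Sequence[float],
                    location2: Sequence[float]) -> float:
    """0.0 (black) at location1, 1.0 (white) at location2, clamped beyond."""
    axis = [b - a for a, b in zip(location1, location2)]
    length_sq = sum(c * c for c in axis)
    if length_sq == 0.0:
        raise ValueError("location1 and location2 must differ")
    offset = [p - a for a, p in zip(location1, point)]
    # Position along the line, as a fraction of the distance between the points.
    t = sum(x * y for x, y in zip(offset, axis)) / length_sq
    return min(max(t, 0.0), 1.0)
```

Only the position along the line matters. A point far off to the side of the line gets the same value as the point on the line it projects to.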

Mask operation

The mask operation mask is probably one of the most interesting ones. Instead of defining a mask itself, it takes two existing masks as input and performs a blend operation on them. It offers the same 18 blend modes supported by layers. Furthermore, because a Mask operation inherits from "Mask", it can be used as input to another Mask operation! This allows for some really interesting opportunities.

Inputs

Mask 1

Mask 2

Blend operation (Replace (Normal), Linear_Dodge (Add), Subtract, Multiply, Darken, Lighten, Color_Burn, Linear_Burn, Screen, Color_Dodge, Overlay, Soft_Light, Hard_Light, Vivid_Light, Linear_Light, Pin_Light, Difference, Exclusion)

MaskTexture and LinearGradient combined (multiplied) in a MaskOperation to create a gradually increasing emission (FillMask layer).
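Here is a small sketch of how such nesting can work. It is an illustration rather than our real code: masks are reduced to a list of values per texel, `MaskValues` is a made-up stand-in for any concrete mask (texture, gradient and so on), and only a handful of the 18 blend operations are included, using their commonly known formulas with Mask 1 as the base and Mask 2 as the blend input.

```python
from abc import ABC, abstractmethod
from typing import Callable, Dict, Sequence

# A few blend operations on values in the 0..1 range (a = Mask 1, b = Mask 2).
BLEND_OPS: Dict[str, Callable[[float, float], float]] = {
    "Multiply": lambda a, b: a * b,
    "Linear_Dodge": lambda a, b: min(1.0, a + b),
    "Subtract": lambda a, b: max(0.0, a - b),
    "Darken": lambda a, b: min(a, b),
    "Lighten": lambda a, b: max(a, b),
    "Screen": lambda a, b: 1.0 - (1.0 - a) * (1.0 - b),
    "Difference": lambda a, b: abs(a - b),
}


class Mask(ABC):
    """Abstract base class: cannot be instantiated directly."""

    @abstractmethod
    def sample(self, texel: int) -> float:
        ...


class MaskValues(Mask):
    """Stand-in for any concrete mask, storing one value per texel."""

    def __init__(self, values: Sequence[float]) -> None:
        self.values = list(values)

    def sample(self, texel: int) -> float:
        return self.values[texel]


class MaskOperation(Mask):
    """Blends two masks. Being a Mask itself, it can be nested."""

    def __init__(self, mask1: Mask, mask2: Mask, operation: str) -> None:
        self.mask1 = mask1
        self.mask2 = mask2
        self.operation = operation

    def sample(self, texel: int) -> float:
        blend = BLEND_OPS[self.operation]
        return blend(self.mask1.sample(texel), self.mask2.sample(texel))
```

Multiplying a texture-like mask `[1.0, 1.0, 0.0]` with a gradient-like mask `[0.0, 0.5, 1.0]` gives `[0.0, 0.5, 0.0]`: the gradient only shows up inside the selected area. The result can in turn be passed as an input to another `MaskOperation`.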

Mask reference

A mask reference doesn't really define a mask itself, but rather references an existing mask. So if you have created a mask elsewhere and want to reuse it in another layer, you can use this mask.
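One way to picture this is a lookup by name. In the sketch below, `registry`, `ConstantMask` and the name-based lookup are all assumptions made for illustration; how references are actually resolved may differ.

```python
from typing import Dict


class ConstantMask:
    """Stand-in for any concrete mask; returns the same value everywhere."""

    def __init__(self, value: float) -> None:
        self.value = value

    def sample(self, texel: int) -> float:
        return self.value


class MaskReference:
    """Defines no mask of its own; forwards to a mask created elsewhere."""

    def __init__(self, registry: Dict[str, ConstantMask], name: str) -> None:
        self.registry = registry
        self.name = name

    def sample(self, texel: int) -> float:
        # Looked up on every call, so changes to the original are picked up.
        return self.registry[self.name].sample(texel)
```

With this approach, a mask is defined once and any number of layers can point at it. Replacing the original mask changes every layer that references it.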

Summary

With the texture builder we want to create a system that is fast, bandwidth-efficient and feature-rich, enabling you to create the style you want for your characters. The idea is to allow for these texture builder recipes to be saved and shared in the cloud, much like how interactions work today.

There's still much left to be done. Currently, there's no interface for all of this, just the code backend. We haven't fully decided yet how the interface will work. We have been considering implementing a node-based system, but fear such a system might come off as too intimidating for some and perhaps too time-consuming to implement. Perhaps a more traditional interface with Photoshop-like layers and options is preferable.

In any case, we are very excited about this system, and can't wait to see what you will create using it!