Exciting news

Originally posted Sep 28 at 2:41pm.

Hello everyone!

New month, new update.

I feel this month has been one of our more productive ones. We've covered so much ground - both gaining a deep understanding of many of the challenges we're faced with, and also implementing solutions to several of them. It really feels like we've reached a turning point in our development, where the biggest uncertainties have been resolved, and thus we are finally ready to start implementing the actual character editor itself on top of these new solutions.

In last month's update, we teased a new feature we have been developing. Now that we've had more time to explore and polish this new technology, we're pleased to finally share it with you.

Before we dive into all this, I must point out that everything posted here is WIP, and does not represent the quality of the final product. All material has been taken from tests designed to verify that our technology works as expected, or directly from 3D authoring software (i.e. not the app).

Adaptive rig - artistic freedom

The rig of a character is, put simply, its skeleton. Naturally, you need a skeleton in order to animate a character, as it is the bones of the skeleton that decide exactly how the mesh should be deformed.

Unfortunately, once you have a skeleton in a mesh, you're also limited by it in a sense. If you, for example, change the shape of the mesh in such a way that it no longer matches up with its skeleton, then you can't use that rig anymore. This means people's creativity in creating shapes for a character is limited by the constraints set by the rig, since you cannot go outside of the rig's bounds. One might imagine that a solution to this problem could be to move or even scale the actual bones of the rig and utilize their vertex binding to create a new mesh shape through their transformations. However, this technique lacks artistic precision. The results rarely turn out the way you want. Furthermore, the farther you move the bones from their original location, the higher the risk of undesired deformation and mesh stretching.
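To make the "move the bones and let the vertex binding do the work" idea a bit more concrete, here is a minimal sketch of linear blend skinning in 2D. It is only an illustration of the general technique, not code from our project: the bone layout (`bind`, `offset`, `scale`) and the function name `skin_vertex` are assumptions made up for this example.

```python
def skin_vertex(vertex, bones, weights):
    """Linear blend skinning for one 2D vertex (illustrative sketch).

    Each hypothetical bone is a dict with:
      "bind"   - the bone's position when the mesh was bound to the rig
      "offset" - how far the bone has been moved from that position
      "scale"  - uniform scale applied around the bind position
    The vertex follows each bone rigidly, and the results are mixed
    using the vertex's binding weights (which should sum to 1).
    """
    x, y = 0.0, 0.0
    for bone, w in zip(bones, weights):
        bx, by = bone["bind"]
        tx, ty = bone["offset"]
        s = bone["scale"]
        # Where this bone alone would place the vertex.
        px = bx + s * (vertex[0] - bx) + tx
        py = by + s * (vertex[1] - by) + ty
        x += w * px
        y += w * py
    return (x, y)


# A vertex bound equally to two bones; only the second bone is moved.
bones = [
    {"bind": (0.0, 0.0), "offset": (0.0, 0.0), "scale": 1.0},
    {"bind": (2.0, 0.0), "offset": (0.0, 2.0), "scale": 1.0},
]
moved = skin_vertex((1.0, 0.0), bones, [0.5, 0.5])
```

The only controls here are a handful of bone transforms and fixed weights, so every vertex ends up as a weighted mix of where its bones would drag it. That is why shaping a mesh this way is so coarse compared to sculpting the shape directly.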

These past months, we've been experimenting with a new custom technology that we simply like to call "adaptive rig". It's something we have researched and developed ourselves. With this technology, we are no longer bound to the limitations of a rig. We can create virtually any shape we like for a mesh, and its rig will automatically adapt to this new shape as it is applied. This gives us tremendous freedom in our mesh shape authoring, as we don't need to be concerned about the rig.

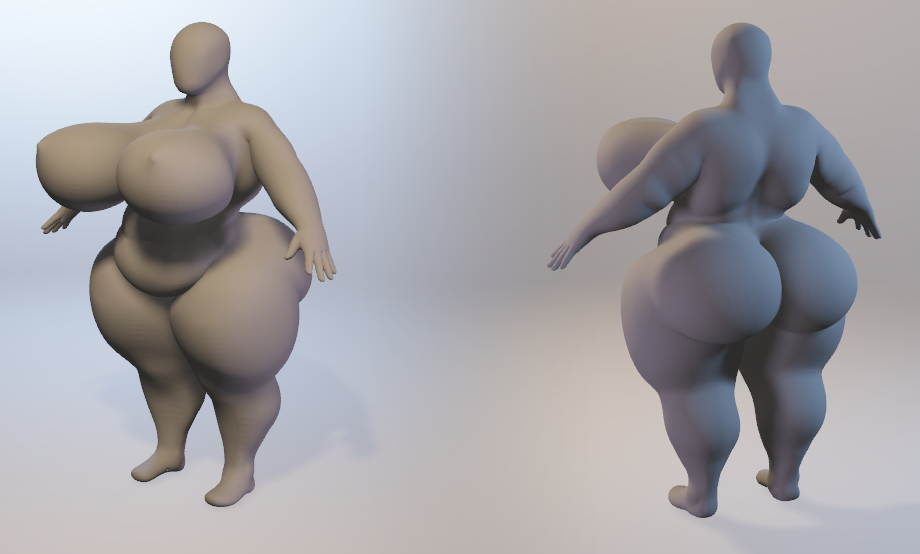

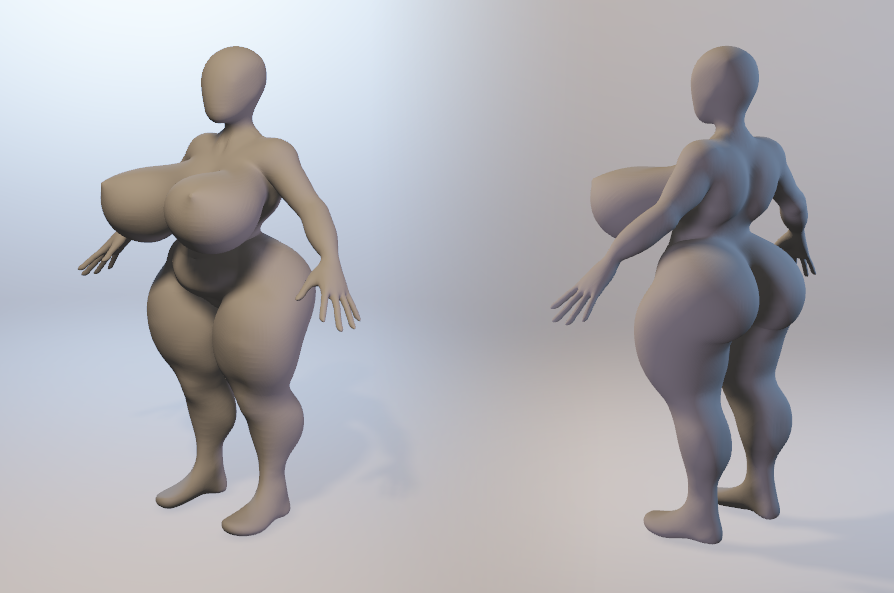

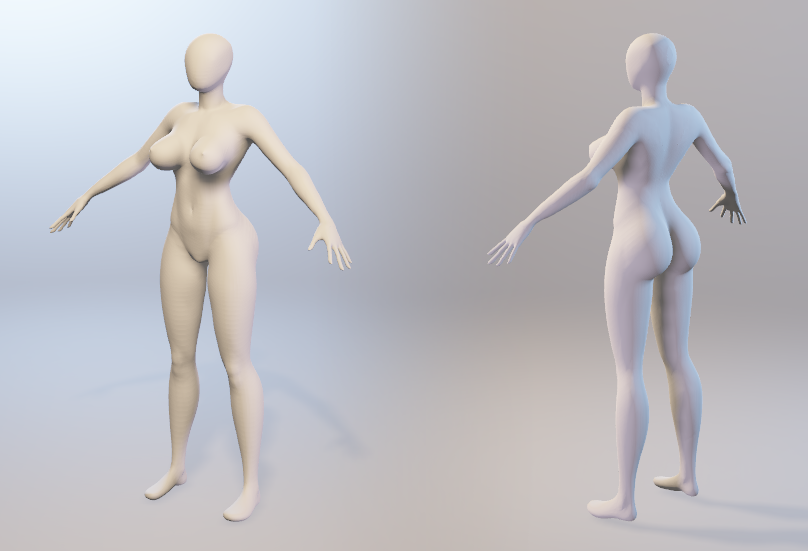

So what does this mean in practice? Well, it means our character creator will go beyond what many others offer. Since we are no longer limited by the rig, we can grow our mesh to hyper sizes (muscular/chubby), shrink it to short-stack sizes, and obviously do everything in between. It's all performed on our universal mesh with the help of adaptive rig.

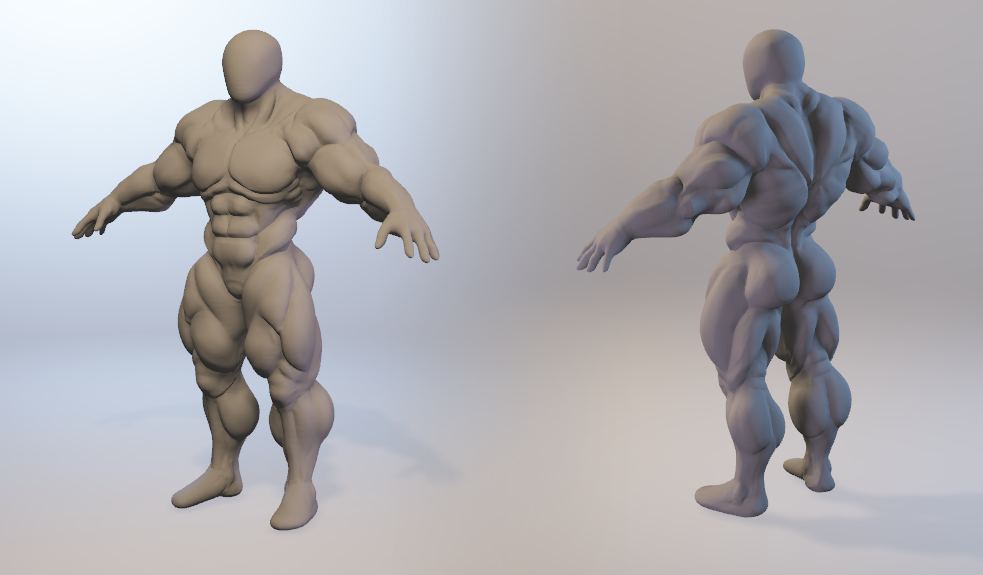

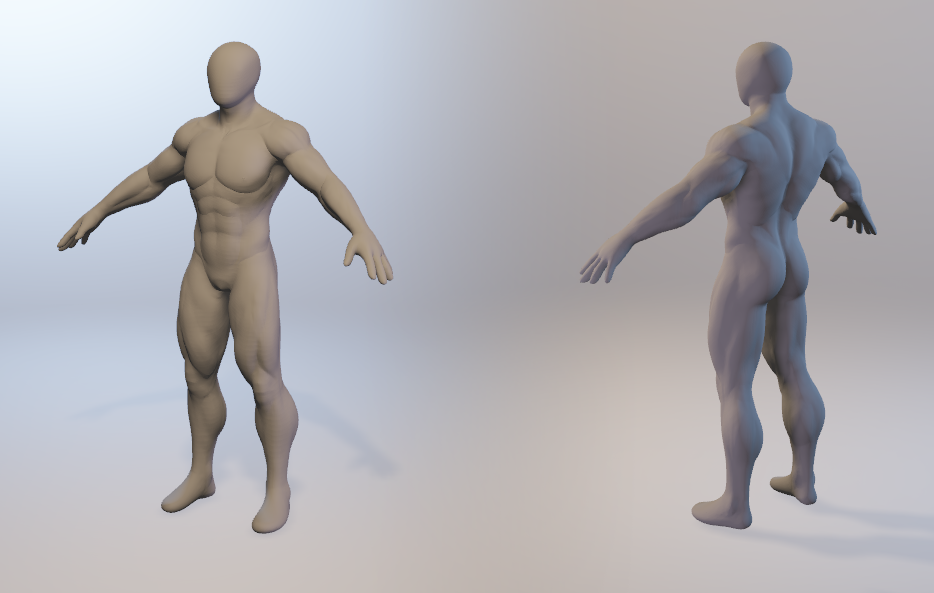

Here's a short demonstration of a hyper muscular shape applied to our universal mesh:

https://gyazo.com/c676b8087733eea928add6b9034ed086

As you can see here, the mesh moves far away from its original rig, but because of our adaptive rig technology, it's not a concern. We are fully free to implement any shape we like.*

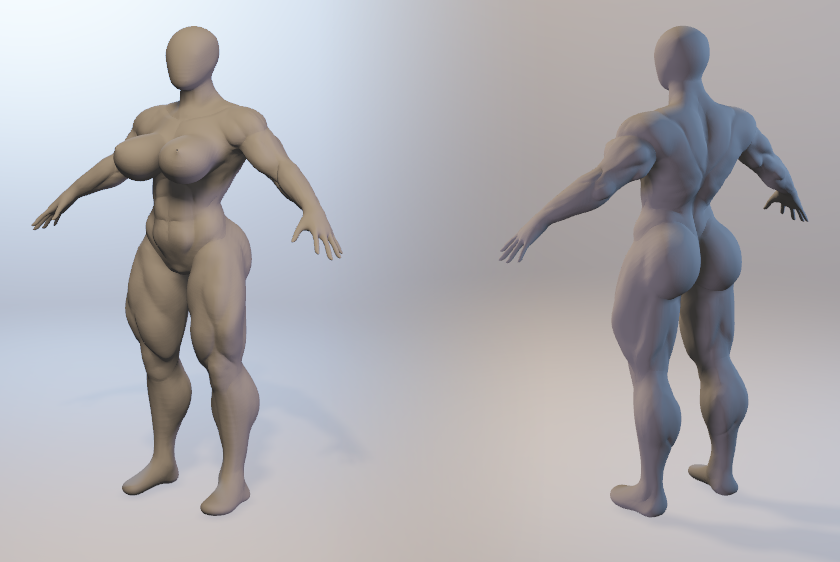

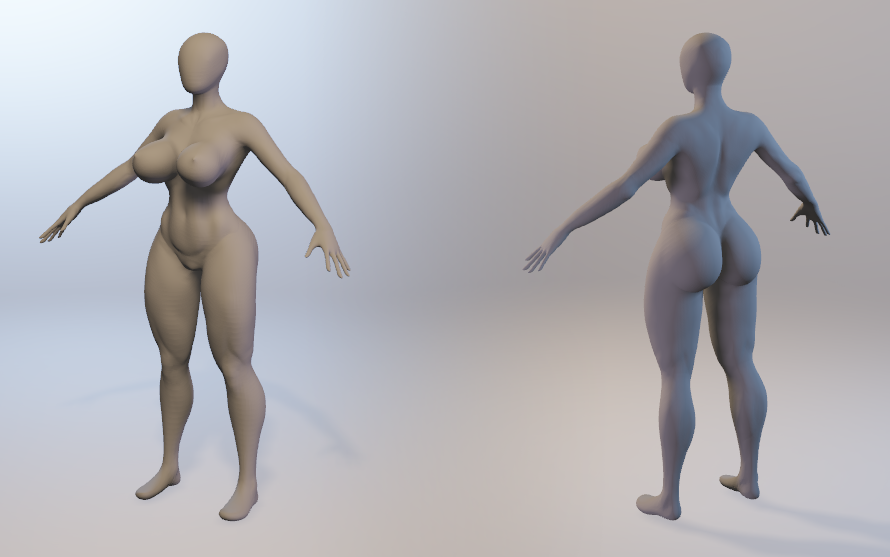

Here are sculpts we've been working on with this adaptive rig technology in mind. While not yet implemented, they still show just how versatile we're aiming our character creator to be.

So as you might imagine, this adaptive rig technology is an extraordinarily powerful tool to have for a character creator. We hope you're as excited about it as we are!

* I should point out that while the adaptive rig technology could be used for virtually any shape and any size, there are other practical limits we need to consider. We cannot grow a mesh to Godzilla sizes, because the difference in resolution relative to smaller meshes would become too great, not to mention the physical interactions, which would not work so well with such a vast difference in size.

Texture shapes - Fast mass texture processing

There's actually more going on in the animated image above than you might first realize. As the mesh is transformed, we're blending in different normal and occlusion maps depending on where in the growth curve the character is. Here are images showing what the mesh transformation looks like without maps being blended in:

https://gyazo.com/89073087b2f1e0f802cbd117a84c240a

https://gyazo.com/218c9b54e3512c5e9071dbe56a8b2451

The maps make a huge difference in the character's appearance. However, using maps together with a shape like this posed a problem for us. If we are to author several dozen if not hundreds of shapes to be used in the character creator, and if each shape needs its own pair of normal and occlusion maps, then the number of textures would rise above the texture limit a shader may have (16). Furthermore, in order to render objects efficiently, you want as few texture lookups in your shaders as possible, so even if we could use more than 16 textures it would be undesirable from a performance perspective.

In order to solve this problem, we created a system we call "TextureShapes". This system works outside of the rendering pipeline, and by utilizing the GPU we are able to blend hundreds of textures together efficiently. What's so great about this system is that it's virtually a 1:1 mapping to blend shapes, making it simple to understand and author maps for. Through it, we can pair each blend shape with its own set of textures, offering us enormous freedom in our shape and texture authoring, as we don't need to worry about exhausting any texture limitations that exist in shaders.
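As a rough illustration of the general idea (not the actual TextureShapes implementation, which runs on the GPU), the sketch below blends any number of per-shape textures into a single output texture ahead of rendering, so the shader only ever has to sample one map. Textures are plain lists of floats here, and the function name `blend_textures` and the "base plus weighted differences" formula are assumptions chosen to mirror how blend shapes are usually combined.

```python
def blend_textures(base, shape_textures, weights):
    """Bake many per-shape textures into one (CPU sketch, assumed formula).

    base           - texel values of the texture for the unmodified mesh
    shape_textures - one texture per blend shape, same size as base
    weights        - current weight of each blend shape (0 = off, 1 = full)

    result = base + sum(weight_i * (shape_i - base)), mirroring how
    blend shape offsets are added on top of a base mesh.
    """
    out = list(base)
    for tex, w in zip(shape_textures, weights):
        if w == 0.0:
            continue  # inactive shapes cost nothing
        for i, (b, t) in enumerate(zip(base, tex)):
            out[i] += w * (t - b)
    return out


# Two tiny 2-texel "occlusion maps", one per shape.
base = [0.5, 0.5]
shapes = [[1.0, 0.5], [0.5, 0.0]]
baked = blend_textures(base, shapes, [0.5, 1.0])
```

Because the blending happens once per change instead of once per pixel per frame, the number of source textures no longer matters to the shader. Note that real normal maps would also need to be renormalized after a linear blend like this; the sketch skips that step.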

GPU Normal processing

But wait! There's more! Yet another thing that might not be apparent in the pictures above is that a third system is present. A mesh consists of different types of data that are required when rendering the object. In addition to the points of the mesh (the vertices), and how they're connected (triangles), "normals" are another form of data that is provided. Essentially, the normals define the direction each surface point on the mesh is facing, so the shader can perform lighting calculations on it. Thought normal calculation was handled automatically? Wrong!

Here's what the mesh looks like if we don't do anything to the normals (i.e. default Unity behavior):

https://gyazo.com/d2ff4afa1aee145db5423333cf9d8bca

https://gyazo.com/e8f5fb1dbbad06b83cc7d1105bc55516

As you can see, the more the mesh grows, the weirder the surface looks. That's because the normals from its base shape are used in its hyper form, which are clearly not adapted for it.

Solving this problem is simple enough. The equation for calculating a vertex normal is uncomplicated - calculate the normals of all the triangles that the vertex is part of, average them, and normalize the result. However, if you need to go through ~30,000 normals each frame and recalculate them, it's going to affect performance... As a matter of fact, performing such a calculation can take around 60 ms! (FYI, if you're targeting 60 frames per second, you have a budget of roughly 16.7 ms per frame.)

Well, no problem. We can always just perform this task in a separate thread, thus not affecting the main thread's execution or causing any slowdowns. However, while the task may not slow anything down, there's still noticeable lag between affecting the shape of the mesh, and its normals being updated:
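The vertex normal recipe above can be sketched in a few lines. This is a plain CPU version written for readability, not the code we run; the helper names (`sub`, `cross`, `normalize`, `vertex_normals`) are made up for the example, and it assumes triangles are given as index triples with counter-clockwise winding.

```python
import math


def sub(a, b):
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def cross(a, b):
    return (a[1] * b[2] - a[2] * b[1],
            a[2] * b[0] - a[0] * b[2],
            a[0] * b[1] - a[1] * b[0])


def normalize(v):
    length = math.sqrt(v[0] ** 2 + v[1] ** 2 + v[2] ** 2)
    if length == 0.0:
        return (0.0, 0.0, 0.0)  # degenerate input, no meaningful direction
    return (v[0] / length, v[1] / length, v[2] / length)


def vertex_normals(vertices, triangles):
    """Per-vertex normals from the unit normals of adjacent triangles."""
    sums = [[0.0, 0.0, 0.0] for _ in vertices]
    for i0, i1, i2 in triangles:
        v0, v1, v2 = vertices[i0], vertices[i1], vertices[i2]
        face = normalize(cross(sub(v1, v0), sub(v2, v0)))
        for i in (i0, i1, i2):
            for k in range(3):
                sums[i][k] += face[k]
    # Normalizing the sum gives the same direction as normalizing the
    # average, so the division by the triangle count can be skipped.
    return [normalize(s) for s in sums]


# Two triangles meeting at a right angle and sharing vertices 0 and 1.
vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0)]
triangles = [(0, 1, 2), (0, 3, 1)]
normals = vertex_normals(vertices, triangles)
```

Vertex 2 only belongs to the first triangle, so it simply gets that triangle's normal, while the shared vertices 0 and 1 end up pointing halfway between the two faces. Every vertex can be processed independently once the triangle normals are known, which makes this kind of work a natural fit for running in parallel.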

https://gyazo.com/22504779bb07a09d2a283ec0dd464faf

We were not happy about this. In Yiffalicious (1), we had the luxury of either pre-calculating the normals, or just calculating a small amount of them, meaning this process never took that amount of time. But this time around, literally the whole mesh can change and thus invalidate all of its normals, requiring a normal recalculation for the whole mesh.

In order to fix this, we have developed a new custom system that recalculates all the normals on the GPU. By using the GPU, we were able to reduce these 60 ms to less than 1 ms!

https://gyazo.com/fa95b5f9fa8ee3932a3d662e390f830f

Soft body system cont.

Some of you might recognize this. A couple of months ago, we showed off a soft body system. In that demonstration, we only used 2 objects. Well, we had some time to look into this technology again, and have developed it a bit further. What you see below is the same system adapted to work on several simultaneous intersections.

https://gfycat.com/InconsequentialFrigidAsianpiedstarling

It looks promising, but more testing is still required to verify its viability. It's one thing to get this tech to work on simple geometry (such as these objects), and quite another beast altogether to get it to work on complex dynamic geometry (such as characters). We have a couple of ideas for how it could be achieved, but as we said, more testing is still required. Hopefully we'll be able to solve it.

Soft bodies would be such a nice addition to YL2, especially considering inflation and just how big characters can get in general this time around. I think soft body deformation is one of the more exciting technologies we're experimenting with. I really, really want to get it into YL2, and I promise you I'll do everything I can to realize this idea.

From what I've learned so far through our testing, it seems like this technology will require a fairly modern DX11 GPU (as in, mid-range gaming GPU within the last 5 years, i.e. GeForce 660 or equivalent) if we are to get it to work on characters. I was a bit reluctant at first to commit to something that will require such hardware, but I think soft bodies are just too damn exciting to not pursue even if it means only supporting newer hardware.

What do you think?

Summary

This past month we've been working on several different technologies required to realize our vision of a versatile character editor. These technologies include automatic rig adaptation, mass texture processing and GPU-powered mesh normal recalculation. All of these were crucial steps that had to be taken, and now that we have them, we are starting to feel ready to dive into building the actual character editor itself.

We've also been experimenting with various other things. Among those is a soft body system that we showed off some time ago and have now had the time to look into a bit further. While more testing is still needed, we are hopeful a soft body system will be part of YL2 at some point.

- odes

Comments

Exciting news? THIS IS AMAZING NEWS! This opens up a MASSIVE AMOUNT of new possibilities for scenes! Right now, Fraenir is the only real bodybuilder character in the game, but with this; Hell! You can make even the cat be a muscle God if you feel like it! I can't wait to play around with this new feature!

...please don't be too long DX