YL2 update Oct 2018

We hope you're doing great!

This month we've continued working on the appendage systems, adding new tools as well as a new type of appendage group. This new group supports custom-made models, so we think that's pretty exciting! We've also been working on new body types. But first, let's jump into the new tools.

Tools

When we first showed off the fluff authoring system last month, we only had two types of tools: one for adding fluff and one for tweaking it with a brush. Now we've implemented the other tools that were already selectable (but not yet defined), as well as some new ones. We've also reorganized the tools in a way we think makes more sense, and implemented a shortcut system for quick and efficient access.

(Tools are organized as buttons, and are also accessible through keyboard shortcuts.)
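The shortcut system can be pictured as a small registry that maps each key to exactly one tool. The Python sketch below is only an illustration: the tool names come from this post, but the key bindings and the `Tool`/`ToolPalette` classes are hypothetical, not YL2's actual code.

```python
from dataclasses import dataclass, field
from typing import Dict, Optional


@dataclass
class Tool:
    name: str
    shortcut: str  # hypothetical key binding, e.g. "c" for Comb


@dataclass
class ToolPalette:
    by_key: Dict[str, Tool] = field(default_factory=dict)
    active: Optional[Tool] = None

    def register(self, tool: Tool) -> None:
        key = tool.shortcut.lower()
        # Refuse duplicate bindings so one key always maps to exactly one tool.
        if key in self.by_key:
            raise ValueError(f"{key!r} is already bound to {self.by_key[key].name}")
        self.by_key[key] = tool

    def on_key(self, key: str) -> Optional[Tool]:
        # Unbound keys leave the active tool unchanged.
        tool = self.by_key.get(key.lower())
        if tool is not None:
            self.active = tool
        return tool


palette = ToolPalette()
for name, key in [("Add", "a"), ("Brush", "b"), ("Remove", "x"), ("Comb", "c"), ("Move", "g")]:
    palette.register(Tool(name, key))

palette.on_key("C")
print(palette.active.name)  # Comb
```

Clicking a tool button would simply set `active` the same way, so buttons and shortcuts share one code path.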

(Removal tool. Hovered instances are highlighted. As with all tools, undo and redo are supported.)

https://gyazo.com/716b615c277785ce240abaa403b4e47c

The removal tool was actually a bit trickier to get working than one might think. Since all instances are part of the same object and mesh (to reduce draw calls and increase performance), we couldn't rely on regular object identification to single out an instance. Instead, we had to find a way to identify each individual strand within the mesh. We ended up solving this by storing additional data in the mesh's UVs, which we could use together with a triangle index to retrieve the instance.
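To make the idea concrete, here is a minimal Python sketch of one way to tag vertices with instance ids in a merged mesh and look an instance up from a triangle index. It assumes a flat triangle list (three vertex indices per triangle) and stores each vertex's instance id in the u coordinate of an extra UV channel; the exact channel and encoding YL2 uses aren't described in this post, and the function names are hypothetical.

```python
from typing import List, Sequence, Tuple

UV = Tuple[float, float]


def combine_instances(instances: Sequence[Tuple[int, Sequence[int]]]) -> Tuple[List[int], List[UV]]:
    """Merge per-instance meshes into one mesh.

    `instances` holds (vertex_count, local_triangle_indices) per strand.
    Every vertex gets its instance id written into the u coordinate of an
    extra UV channel (a hypothetical layout for illustration).
    """
    triangles: List[int] = []
    instance_uvs: List[UV] = []
    for instance_id, (vertex_count, local_triangles) in enumerate(instances):
        offset = len(instance_uvs)  # first vertex index of this instance in the merged mesh
        triangles.extend(offset + i for i in local_triangles)
        instance_uvs.extend([(float(instance_id), 0.0)] * vertex_count)
    return triangles, instance_uvs


def instance_from_triangle(triangles: Sequence[int], instance_uvs: Sequence[UV], triangle_index: int) -> int:
    # All three corners of a triangle belong to the same instance, so one is enough.
    vertex = triangles[3 * triangle_index]
    # UVs are floats; round in case the id picked up precision loss.
    return int(round(instance_uvs[vertex][0]))


quad = (4, [0, 1, 2, 0, 2, 3])  # one strand = one quad made of two triangles
triangles, instance_uvs = combine_instances([quad, quad, quad])
print(instance_from_triangle(triangles, instance_uvs, 3))  # 1
```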

The highlight is achieved through the same identification. In the shader, we provide the index of the instance that should currently be highlighted, and since the mesh carries data on which instance each vertex and face belongs to, it's as simple as comparing that data against the provided target index.
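The comparison the shader performs is easy to mimic on the CPU. The Python sketch below is a stand-in for per-vertex shader logic, not actual shader code; the `NO_HIGHLIGHT` value of -1 for "nothing hovered" is a hypothetical convention.

```python
from typing import List, Sequence, Tuple

Color = Tuple[float, float, float]
NO_HIGHLIGHT = -1  # hypothetical "nothing hovered" value


def shade_vertices(instance_ids: Sequence[float], highlighted_index: int,
                   base: Color, highlight: Color) -> List[Color]:
    """CPU stand-in for the per-vertex comparison a shader would do."""
    # Ids arrive as floats (they come from UV data), so compare with a tolerance
    # instead of exact equality.
    return [highlight if abs(i - highlighted_index) < 0.5 else base for i in instance_ids]


ids = [0.0, 0.0, 0.0, 0.0, 1.0, 1.0, 1.0, 1.0]
colors = shade_vertices(ids, 1, base=(0.5, 0.5, 0.5), highlight=(1.0, 0.8, 0.0))
print(colors.count((1.0, 0.8, 0.0)))  # 4
```

Because only a single integer changes when the cursor moves, hovering never requires rebuilding the mesh.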

Another problem we faced was that preparing a mesh for raycasting is a very heavy operation. Luckily, we have implemented our own custom raycaster (which we've shown off before) that doesn't require any baking. By using our own raycaster, we could quickly perform raycasts against the fluff mesh to find the intersected triangle, and from its triangle index, the fluff instance via the data baked into the mesh.
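Our raycaster's internals aren't described here, so the sketch below swaps in a well-known technique instead: a brute-force loop running the Möller-Trumbore ray/triangle test over every triangle. Like the raycaster mentioned above, it needs no preprocessing step; unlike a production raycaster, it has no spatial partitioning, so its cost grows linearly with the triangle count. The returned triangle index is what the instance lookup described earlier needs.

```python
from typing import Optional, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def cross(a: Vec3, b: Vec3) -> Vec3:
    return (a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0])


def ray_triangle(origin: Vec3, direction: Vec3, v0: Vec3, v1: Vec3, v2: Vec3,
                 eps: float = 1e-9) -> Optional[float]:
    """Moller-Trumbore test: distance t along the ray to the hit, or None."""
    e1, e2 = sub(v1, v0), sub(v2, v0)
    p = cross(direction, e2)
    det = dot(e1, p)
    if abs(det) < eps:
        return None  # ray is parallel to the triangle's plane
    inv = 1.0 / det
    s = sub(origin, v0)
    u = dot(s, p) * inv
    if u < 0.0 or u > 1.0:
        return None
    q = cross(s, e1)
    v = dot(direction, q) * inv
    if v < 0.0 or u + v > 1.0:
        return None
    t = dot(e2, q) * inv
    return t if t > eps else None  # ignore hits behind the ray origin


def raycast(origin: Vec3, direction: Vec3, vertices: Sequence[Vec3],
            triangles: Sequence[int]) -> Optional[Tuple[int, float]]:
    """Test every triangle directly; return (triangle_index, t) of the nearest hit."""
    best = None
    for tri in range(len(triangles) // 3):
        a, b, c = (vertices[triangles[3 * tri + k]] for k in range(3))
        t = ray_triangle(origin, direction, a, b, c)
        if t is not None and (best is None or t < best[1]):
            best = (tri, t)
    return best


vertices = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (1.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
triangles = [0, 1, 2, 0, 2, 3]
print(raycast((0.75, 0.25, 1.0), (0.0, 0.0, -1.0), vertices, triangles))  # (0, 1.0)
```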

(Combing tool. Simply press and hold the mouse button and move the cursor in the direction you want the instances to point. The strength of the combing is determined by the distance between the mouse-down position and the current cursor location.)

https://gyazo.com/305f6a616f954ae8c3fe5fe627cbab31
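The combing rule from the caption above could be sketched like this in Python. It assumes a 2D screen-space direction per instance, a hypothetical `max_drag` distance at which the comb reaches full strength, and simple linear blending toward the drag direction; how YL2 actually maps drag length to strength isn't specified here.

```python
import math
from typing import List, Sequence, Tuple

Vec2 = Tuple[float, float]


def comb(directions: Sequence[Vec2], mouse_down: Vec2, cursor: Vec2,
         max_drag: float = 100.0) -> List[Vec2]:
    dx, dy = cursor[0] - mouse_down[0], cursor[1] - mouse_down[1]
    length = math.hypot(dx, dy)
    if length == 0.0:
        return list(directions)  # no drag yet, nothing to comb
    target = (dx / length, dy / length)
    strength = min(length / max_drag, 1.0)  # longer drag -> stronger combing
    result = []
    for x, y in directions:
        # Lerp toward the comb direction, then renormalize to a unit vector.
        nx = x + (target[0] - x) * strength
        ny = y + (target[1] - y) * strength
        n = math.hypot(nx, ny)
        result.append((nx / n, ny / n) if n > 0.0 else target)
    return result


half = comb([(0.0, 1.0)], mouse_down=(0.0, 0.0), cursor=(50.0, 0.0))
full = comb([(0.0, 1.0)], mouse_down=(0.0, 0.0), cursor=(150.0, 0.0))
print(full)  # [(1.0, 0.0)]
```

A half-length drag turns an upward strand halfway toward the drag direction, while a drag of `max_drag` or more snaps it fully.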

(Move instances.)

https://gyazo.com/004123befa6af384d536c33381637d84

Naturally, moving and combing can also be done on a per-instance basis:

(Adjusting individual instances. Using keyboard shortcuts to access specific tools quickly and easily.)

https://gyazo.com/71595dbcd4aea41c4c8e16c6fc9c619f

As with all things in YL2, we develop everything in as abstract and generic a fashion as possible, meaning no hard-coding and maximum code reuse. Initially it takes a bit longer to develop this way, but over time it pays off greatly.
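One common way to get both this kind of tool reuse and undo/redo for every tool is the command pattern: each edit becomes an object that knows how to apply and revert itself, written against an interface all appendage groups share. The Python sketch below assumes a hypothetical `Group` type exposing its instances as a dictionary; it illustrates the pattern and is not YL2's actual class layout.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List, Optional, Protocol


@dataclass
class Group:
    """Hypothetical shared shape of any appendage group (fluff, model, ...)."""
    kind: str
    instances: Dict[int, Any] = field(default_factory=dict)


class Command(Protocol):
    def do(self) -> None: ...
    def undo(self) -> None: ...


class RemoveInstance:
    """A reversible edit that works on any Group, whatever its kind."""

    def __init__(self, group: Group, instance_id: int) -> None:
        self.group = group
        self.instance_id = instance_id
        self.removed: Optional[Any] = None

    def do(self) -> None:
        self.removed = self.group.instances.pop(self.instance_id)

    def undo(self) -> None:
        self.group.instances[self.instance_id] = self.removed


class UndoStack:
    def __init__(self) -> None:
        self._done: List[Command] = []
        self._undone: List[Command] = []

    def execute(self, command: Command) -> None:
        command.do()
        self._done.append(command)
        self._undone.clear()  # a fresh edit invalidates the redo history

    def undo(self) -> None:
        if self._done:
            command = self._done.pop()
            command.undo()
            self._undone.append(command)

    def redo(self) -> None:
        if self._undone:
            command = self._undone.pop()
            command.do()
            self._done.append(command)


fluff = Group("fluff", {0: "strand A", 1: "strand B"})
spikes = Group("model", {0: "spike A"})
history = UndoStack()
history.execute(RemoveInstance(fluff, 1))
history.execute(RemoveInstance(spikes, 0))
history.undo()
print(sorted(fluff.instances), sorted(spikes.instances))  # [0] [0]
```

The same `RemoveInstance` command serves both group kinds, and a new group type gets undo/redo for free as long as it exposes the shared interface.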

Custom content import

While we will try to offer as many different types of masks and embellishments as possible, we also want to enable you to import your own custom content. For this purpose, we have created two types of importers: a texture importer and a model importer.

Texture importer

The texture importer takes an image path as input, reads each individual color channel in that image, and creates a single-channel texture (a mask) from each one. You can think of a mask as a selection on the surface of a model, used for filling it with color, among other things.
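The channel split itself is simple. The Python sketch below assumes the pixels have already been decoded into a flat list of 8-bit RGBA tuples (PNG decoding is left out) and models the import window's channel selection and naming as a dictionary; the function and mask names are hypothetical.

```python
from typing import Dict, List, Sequence, Tuple

RGBA = Tuple[int, int, int, int]
CHANNELS = ("r", "g", "b", "a")


def split_channels(pixels: Sequence[RGBA], selection: Dict[str, str]) -> Dict[str, List[int]]:
    """Turn an RGBA image into one single-channel mask per selected channel.

    `selection` maps a channel letter to the name chosen for its mask,
    mirroring an import window where channels are picked and named.
    """
    masks: Dict[str, List[int]] = {}
    for channel, mask_name in selection.items():
        index = CHANNELS.index(channel)  # raises ValueError for an unknown channel
        masks[mask_name] = [pixel[index] for pixel in pixels]
    return masks


image = [(255, 0, 10, 255), (0, 128, 20, 255)]  # a 2-pixel "image", row-major
print(split_channels(image, {"r": "Belly", "g": "Stripes"}))
# {'Belly': [255, 0], 'Stripes': [0, 128]}
```

Packing up to four masks into one image this way lets a single PNG carry several selections.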

To import a texture, simply drag and drop a PNG file into the window, or go to Import > Texture and browse to your image.

https://gyazo.com/e22858d9944e9e58cd07ac77d859fbb1

A window will appear, giving you the opportunity to select which channels to import and what to name them.

If you go ahead and import the channels, a new item will be created in CharacterBuilder > LocalAssets for each selected channel:

https://gyazo.com/d23fa282910da9dac6c4da5db43484eb

You can also choose to import the image as a map instead. Unlike masks, a map is a full-color texture and resides in a single LocalAsset item:

https://gyazo.com/4aef4af37b128a2c9ba9a57d8ed62d5c

A LocalAsset doesn't do anything until it is referenced somewhere. Depending on what type of local asset it is (mask, map or model), it can only be referenced from specific types of objects. For example, a FillMaskLayer can only reference masks, while the eye options can only reference a map.
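Restricting references by asset type could look something like the Python sketch below. `AssetKind`, `AssetSlot` and the slot variables are hypothetical names; only the mask/map/model distinction and the FillMaskLayer and eye-options examples come from this post.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class AssetKind(Enum):
    MASK = "mask"
    MAP = "map"
    MODEL = "model"


@dataclass
class LocalAsset:
    name: str
    kind: AssetKind


class AssetSlot:
    """A reference field that only accepts one kind of LocalAsset."""

    def __init__(self, accepts: AssetKind) -> None:
        self.accepts = accepts
        self.asset: Optional[LocalAsset] = None

    def assign(self, asset: LocalAsset) -> None:
        if asset.kind is not self.accepts:
            raise TypeError(f"expected a {self.accepts.value}, got a {asset.kind.value}")
        self.asset = asset


fill_mask_layer_mask = AssetSlot(AssetKind.MASK)  # e.g. a FillMaskLayer's mask field
eye_map = AssetSlot(AssetKind.MAP)                # e.g. the map field in eye options

eye_map.assign(LocalAsset("iris", AssetKind.MAP))
try:
    fill_mask_layer_mask.assign(LocalAsset("iris", AssetKind.MAP))
except TypeError as error:
    print(error)  # expected a mask, got a map
```

An editor UI could use the same `accepts` field to list only compatible LocalAssets in a picker, instead of rejecting a wrong choice after the fact.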

Here, we're referencing the newly imported map in eye options:

https://gyazo.com/cd29078e7a15b25dd6d6df67047a373b

If the "Reload" option is ticked in the LocalAsset, it will automatically be updated if the source file on disk is changed:

https://gyazo.com/afe9fd9548eef5a1e9612ac944b2b64a
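A minimal way to implement the "Reload" behavior is to poll the source file's modification time. The Python sketch below does exactly that; polling is an assumption made for illustration (an OS file-watching API would work too), and `ReloadableAsset` is a hypothetical stand-in for a LocalAsset.

```python
import os
import tempfile


class ReloadableAsset:
    """Hypothetical LocalAsset stand-in that re-reads its source file when it changes."""

    def __init__(self, path: str, reload: bool = True) -> None:
        self.path = path
        self.reload = reload  # corresponds to the "Reload" checkbox
        self._mtime = os.stat(path).st_mtime_ns
        self.data = self._read()

    def _read(self) -> bytes:
        with open(self.path, "rb") as f:
            return f.read()

    def poll(self) -> bool:
        """Call periodically (e.g. once per editor frame); True if the asset was reloaded."""
        if not self.reload:
            return False
        mtime = os.stat(self.path).st_mtime_ns
        if mtime == self._mtime:
            return False
        self._mtime = mtime
        self.data = self._read()
        return True


path = os.path.join(tempfile.mkdtemp(), "mask.png")
with open(path, "wb") as f:
    f.write(b"v1")
asset = ReloadableAsset(path)
frozen = ReloadableAsset(path, reload=False)

with open(path, "wb") as f:
    f.write(b"v2")
# Bump the timestamp explicitly, since some file systems have coarse mtime resolution.
st = os.stat(path)
os.utime(path, ns=(st.st_atime_ns, st.st_mtime_ns + 2_000_000_000))
print(asset.poll(), asset.data)  # True b'v2'
```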

Model importer

The model importer reads .obj files. To import a model, simply drag and drop an .obj file or browse to one through the Import > Model command.

https://gyazo.com/1cb23b0e1d3b843049b5a2040a707b91

There is currently no preview window for models.
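For reference, the core of an .obj reader is small. The Python sketch below handles only vertex positions (`v`) and faces (`f`), including the `v/vt/vn` index forms, 1-based and negative indices, and fan triangulation of polygons; texture coordinates, normals, groups and materials are ignored. It illustrates the file format and is not YL2's importer.

```python
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


def parse_obj(text: str) -> Tuple[List[Vec3], List[int]]:
    vertices: List[Vec3] = []
    triangles: List[int] = []
    for line in text.splitlines():
        parts = line.split()
        if not parts or parts[0].startswith("#"):
            continue  # blank line or comment
        if parts[0] == "v":
            x, y, z = (float(c) for c in parts[1:4])  # an optional w component is ignored
            vertices.append((x, y, z))
        elif parts[0] == "f":
            corners = []
            for token in parts[1:]:
                # Tokens look like "v", "v/vt", "v//vn" or "v/vt/vn"; keep the position index.
                i = int(token.split("/")[0])
                # OBJ indices are 1-based; negative ones count back from the latest vertex.
                corners.append(i - 1 if i > 0 else len(vertices) + i)
            # Fan-triangulate: (0,1,2), (0,2,3), ...
            for k in range(1, len(corners) - 1):
                triangles.extend((corners[0], corners[k], corners[k + 1]))
    return vertices, triangles


quad = """
# a unit quad
v 0 0 0
v 1 0 0
v 1 1 0
v 0 1 0
f 1/1/1 2/2/1 3/3/1 4/4/1
"""
vertices, triangles = parse_obj(quad)
print(len(vertices), triangles)  # 4 [0, 1, 2, 0, 2, 3]
```

Fan triangulation is only correct for convex polygons, which covers the quads most modeling tools export.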

An imported model can be used either in a "Part" or in an "Appendage model group", which brings us to our next topic.

Appendage model group

This is a new appendage group we've implemented this month. Unlike the fluff appendage group, this one references a model, which is repeated for each instance within the group.

To add a new appendage model group, go to appendages, click the plus icon and select "Create model group". Then, in the appendage group attributes, reference a model and simply start adding instances!

(Example of adding model instances. As you can see, you configure the rotation and scale of the items by clicking and dragging the mouse.)

https://gyazo.com/f65da38587674fff53a79ea1f7b1a9a2
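The click-and-drag placement in the clip above could be modeled roughly as follows. The drag angle becomes the instance's rotation, the drag length becomes its uniform scale, and the referenced model is then repeated once per instance. This is a hypothetical Python sketch: it rotates around a fixed z axis for simplicity, whereas a real tool would presumably orient each instance to the surface it's placed on. The `pixels_per_unit` factor is also an assumption.

```python
import math
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]
Vec2 = Tuple[float, float]


@dataclass
class ModelInstance:
    position: Vec3
    angle: float  # radians around the z axis (simplifying assumption)
    scale: float


def instance_from_drag(position: Vec3, mouse_down: Vec2, cursor: Vec2,
                       pixels_per_unit: float = 100.0) -> ModelInstance:
    dx, dy = cursor[0] - mouse_down[0], cursor[1] - mouse_down[1]
    # Drag direction sets the rotation, drag length sets the scale (clamped above zero).
    return ModelInstance(position, math.atan2(dy, dx), max(math.hypot(dx, dy) / pixels_per_unit, 1e-3))


def bake_group(model_vertices: Sequence[Vec3], instances: Sequence[ModelInstance]) -> List[Vec3]:
    """Repeat the referenced model once per instance: scale, rotate, then translate."""
    out: List[Vec3] = []
    for inst in instances:
        c, s = math.cos(inst.angle), math.sin(inst.angle)
        px, py, pz = inst.position
        for x, y, z in model_vertices:
            x, y, z = x * inst.scale, y * inst.scale, z * inst.scale
            out.append((c * x - s * y + px, s * x + c * y + py, z + pz))
    return out


spike = [(1.0, 0.0, 0.0)]  # a one-vertex stand-in for the referenced model
inst = instance_from_drag((0.0, 0.0, 0.0), mouse_down=(0.0, 0.0), cursor=(0.0, 200.0))
print([tuple(round(c, 6) for c in v) for v in bake_group(spike, [inst])])  # [(0.0, 2.0, 0.0)]
```

Since instances store only a transform, swapping the referenced model and re-running `bake_group` updates every instance at once.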

Naturally, we have implemented our tools in such an abstract way that they can be applied to this type of group as well.

https://gyazo.com/0579c8fd85cfd7ffd18793442a846f42

(The brush tool is missing here because it needs some specific configuration to work with different types of appendage groups. We simply haven't gotten to it yet for the model appendage group, but the code itself works with any group.)

https://gyazo.com/332109b8705f080e0ef5c1676211b1dd

As with images, if the source model asset is changed (and if the "Reload" checkbox is ticked in the LocalAsset object), the model will be updated automatically:

https://gyazo.com/3af7c3e7ebe8a1d0cfe29a0179de146a

You can also change the referenced asset to update all the instances:

https://gyazo.com/e0492278edaab804b2ee564699c28c3f

Parts

Custom models will also be possible to reference in "parts", but we have not fully implemented the part system yet, so we can't show it yet.

What's the difference between parts and appendages?

A part is a single model that can be added to a character. It has its own texture building system, can have its own appendage groups applied, and will also be possible to manipulate during interactions. For example, a custom ear would have to be added to a model as a part, which could then be transformed in an interaction. Parts can also be mirrored automatically, so you only need to add them to one side.

An appendage group on the other hand is a group of several instances. They can't be affected in an interaction (they're static), they don't have their own texture builders (texturing will work differently for them), and they can't have their own appendage groups.

Texture builder

Just to rehash (it's been a while since we covered the texture building system): the texture builder is a system that uses layers to create different texture outputs. In YL2, a texture builder produces albedo, emission and metal/smoothness textures, which are used by the character. Each layer has its own properties and references. For example, you can create a mask that selects the lower arm area with a gradient (think foxes), and then reference that mask in a layer to fill the albedo texture with color for that selection.

The purpose of the texture building system is to offer a way to generate textures procedurally, which makes them very efficient to store (they take up almost no space). You will of course still be able to texture things "normally" too, but beware: textures are heavy assets and there will be a final size limit. Combine custom textures with procedural layers to achieve the look you want while keeping file sizes low!
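As a rough illustration of the lower-arm example, here is a Python sketch of a gradient mask feeding a fill layer. It assumes one-dimensional "textures" (texels sampled along the arm) and plain linear interpolation by mask weight; YL2's actual blend modes and texture layout aren't covered in this post, and all names are hypothetical.

```python
from dataclasses import dataclass
from typing import List, Sequence, Tuple

Color = Tuple[float, float, float]


def gradient_mask(vs: Sequence[float], start: float, end: float) -> List[float]:
    """1.0 up to `start`, fading linearly to 0.0 at `end` (requires start < end)."""
    return [min(max((end - v) / (end - start), 0.0), 1.0) for v in vs]


@dataclass
class FillLayer:
    color: Color
    mask: List[float]  # one selection weight per texel, 0..1


def build_albedo(base: Color, layers: Sequence[FillLayer], texel_count: int) -> List[Color]:
    texels: List[Color] = [base] * texel_count
    for layer in layers:  # layers are applied bottom to top
        texels = [
            tuple(b + (c - b) * m for b, c in zip(texel, layer.color))  # lerp(current, fill, mask)
            for texel, m in zip(texels, layer.mask)
        ]
    return texels


# Texels sampled along the arm, from wrist (v = 0) to shoulder (v = 1).
vs = [0.0, 0.25, 0.375, 0.5, 1.0]
sock = FillLayer(color=(0.0, 0.0, 0.0), mask=gradient_mask(vs, start=0.25, end=0.5))
print(build_albedo((1.0, 0.5, 0.0), [sock], len(vs)))
# [(0.0, 0.0, 0.0), (0.0, 0.0, 0.0), (0.5, 0.25, 0.0), (1.0, 0.5, 0.0), (1.0, 0.5, 0.0)]
```

The only data that needs storing here is the layer parameters (two gradient endpoints and a color), not the resulting pixels. That is why procedural layers take up almost no space.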

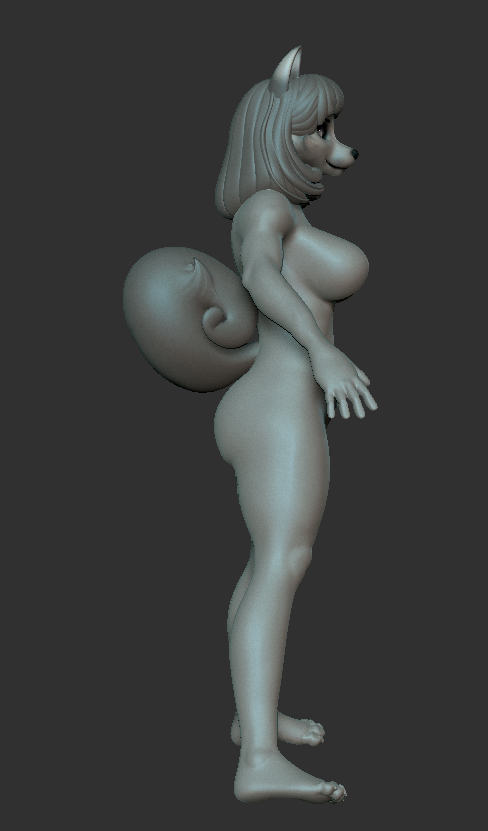

Dogson stuff

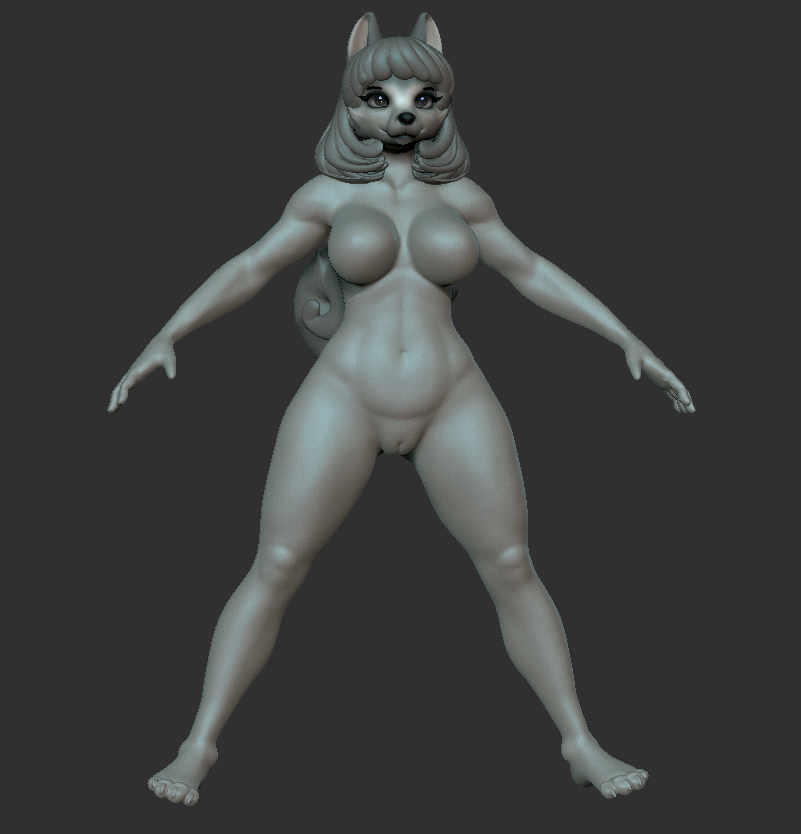

Dogson has continued working on different body types together with Nomistrav. Last month we showed off a male shape, so naturally this month we've been working on a female shape:

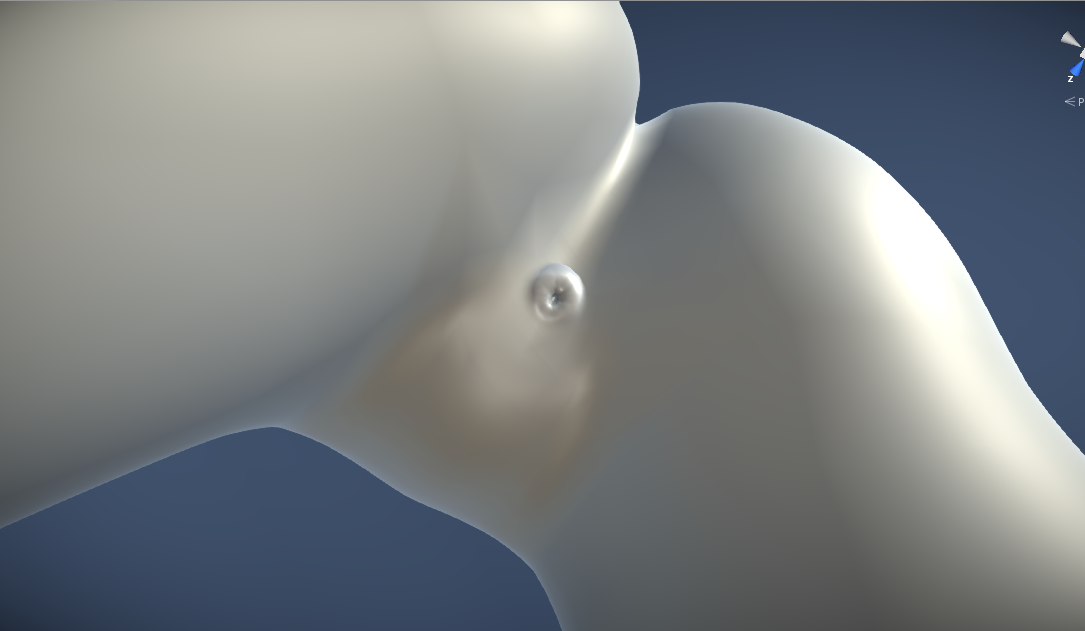

We've also made some changes to how we are implementing our models. Previously, the anus was "hard coded" into the model, since that was how we used to do things in Yiffalicious (1). However, we realized we could take advantage of our mesh blending tech and separate the anus from the body, which would not only make the anus exchangeable (and thus more customizable), but also make the authoring process easier for us.

Here's an image showing how the blend tech works with the anus:

And just to prove it's actually a separate model:

We're also making changes to how character materials and UVs are organized, to better streamline our authoring process. We're not fully done with this yet, and it's something we'll have to continue working on in the coming month.

2018 release realism

The end of 2018 is approaching fast, and truth be told, I don't know if we're gonna make it. An early release of YL2's character editor before the end of the year is not completely out of the picture just yet, and theoretically it's still possible. However, from a practical viewpoint, I know that finishing those last few percent is always tougher than you think.

There are many reasons why the development of the character editor is taking so long, but if I were to try and sum it up, it would be that (aside from the complexity and ambition of the project) arriving at the strong foundation we're going for takes a lot of iterations. When we start working on a new feature or new content, we think carefully about it every step of the way, yet during its implementation, new problems pop up or new realizations are made that require us to reassess our situation if we are to make something that will last. Each time this happens, the foundation gets a little bit better and stronger, but at the cost of time. I'm confident that in the end, this time will prove to have been well invested. Our framework and organization of content are so much better and more dynamic now than when we first started.

So with all that said, what is actually left to do?

Part system

This is already started, but more work is required to finish it and make it compatible with the appendage and texture building systems.

"States" for texture builder

Essentially, when you click an object in the YL2 editor, the editor can transition into a new state tailored for that object. An example of this is the fluff authoring system, which the editor transitions into when you click a fluff group. Likewise, we need to create these types of states for the different objects used in the texture building system to ease authoring.

Content

We need to finish the new content (models, maps and shapes) and get it into the editor. The content currently used in the editor is ooold - something we created to have something to test on.

Shaders

Most if not all of the problems we have encountered regarding shading are already solved (seamless tech, areola & nipple projection, read mesh from compute shader data, general shading), but we don't have a final character shader containing all these solutions yet. It's mostly a matter of putting them all together rather than inventing something new.

Shape layer system

This is something that we've practically already finished, but we had to put it on hold until we got more final looking content and shapes. We're reaching that stage where this content is getting ready, so we can finally start testing the system. Naturally, it's likely some tweaking will be required and there may also be unexpected problems.

Saving & loading

This is something we've designed the app with in mind from the very start. We've been diligent in making sure each and every new object we implement supports these tasks. However, we have yet to test saving and loading of complex hierarchies (such as a character), so that's definitely something that's going to take some time. How much time depends on how many problems we run into.
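A common way to make whole hierarchies saveable is to have each node serialize its own properties and recurse into its children, so a character saves the same way as any smaller object. The Python sketch below round-trips a small, made-up character hierarchy through JSON. The node type names come from this post, but the `Node` structure and the JSON layout are assumptions for illustration.

```python
import json
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class Node:
    type: str
    props: Dict[str, Any] = field(default_factory=dict)
    children: List["Node"] = field(default_factory=list)

    def to_dict(self) -> Dict[str, Any]:
        # Each node writes its own data, then delegates to its children.
        return {"type": self.type, "props": self.props,
                "children": [child.to_dict() for child in self.children]}

    @staticmethod
    def from_dict(data: Dict[str, Any]) -> "Node":
        return Node(data["type"], dict(data["props"]),
                    [Node.from_dict(child) for child in data["children"]])


def save(root: Node) -> str:
    return json.dumps(root.to_dict(), indent=2)


def load(text: str) -> Node:
    return Node.from_dict(json.loads(text))


character = Node("Character", {"name": "Fox"}, [
    Node("TextureBuilder", {}, [
        Node("FillMaskLayer", {"mask": "Belly", "color": [1.0, 1.0, 1.0]}),
    ]),
    Node("FluffGroup", {"instances": 3}),
])
print(load(save(character)) == character)  # True
```

Asset references are stored by name here rather than embedding the asset data, which keeps saved files small. The round-trip equality check is also a cheap automated test to run on every new object type.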

Default content & samples

In addition to offering different types of parts (heads, hands, feet etc) and body types, we also want to offer pre-made masks and appendages that you can use. Furthermore, we intend to create sample characters and content that you can learn from when creating your own. We think providing sample content is very important. The best way to learn is by example!

Posing & simulation

We really want to make it possible to pose the characters you create and run them together with simulation so you can get a better feel for how they look and behave. Otherwise they'd just stand static in a T-pose modeled in zero-gravity, which means tits standing out and stuff like that!

Polish

Naturally, even when we've made all these things, we will still have to polish the final result.

So as you can see, there's a lot left to do, and trying to squeeze all of it into two months will be tough - especially considering how many mandatory social gatherings there are around the holiday season.

Summary

We're still chugging along, implementing new features and content. This month we've spent time adding more tools to the appendage systems, and we've also added a new type of appendage group that supports custom models. Dogson has been working on new body types, and we've made some changes to how the models will be set up to ease our authoring process while offering you more customization.

There's still plenty of things left to do, so while we're happy about the progress, it remains to be seen if we'll manage to get something out before the end of the year. We're getting really close now though. A release is not far away.

Comments

Yeah, i dont think i have the patience of a superhuman. Imma get old first before anything would be done with YL2. I guess the next generation got something to have fun with. lol

Me, personally, i gave up hope for this thing to finish, if ever. Imma be real busy with real life afterwards that i wont have time for "this" anymore, sadly.

Bye bye, peeps. Im abandoning ship, lul. (Not like its gonna matter since i aint no patron

Good luck with your future progress. @Odes & Crews

[I'll still be lurking for skins and whatnot in the forums tho...lol]

I dont know. Can you tell? :ppp

Man, i need to stop taking coffees.

The blend technique works well for small things and things that have uniform skinning. The female genital area is much more complicated, so this technique would not be suitable for it. We do however intend to make female genitals configurable in some ways, but that may be added later on.

Yes.

I ask because I've got some wild ideas for yl2 that would make use of this kind of customizability and I'd love to know what could be possible?

Naturally, you can use other people's characters in your interactions. Data-wise, the characters will only be referenced in the interaction file though, not saved along with it (to avoid redundancy).

@fudmo

Essentially any scale we feel like implementing, since body types exist independent of skeleton and rig. For starters though, we will offer a few different body type variants that can be combined with a tall or short base shape. Bones will probably be possible to scale to some degree as well.